AI Chatbots Are Dangerous on Both Ends

Unfiltered AI chatbots create risks from every direction:

Bad Outputs

Give confidently wrong legal, financial, or health advice

Inappropriate Inputs

Users exploit chatbots with off-topic, malicious, or inappropriate queries

Scope creep

AI attempts to answer questions it was never meant to handle

Reputation Damage

Inappropriate responses or easily manipulated bots harm your credibility

Legal Liability

Your organization is held responsible for every AI interaction - even the abusive ones

Without proper filtering, your helpful information chatbot becomes a liability nightmare.

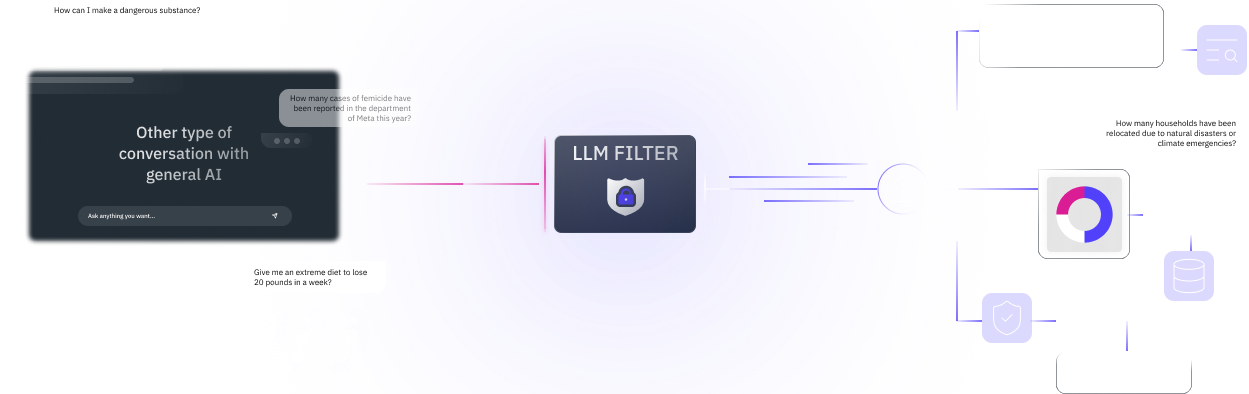

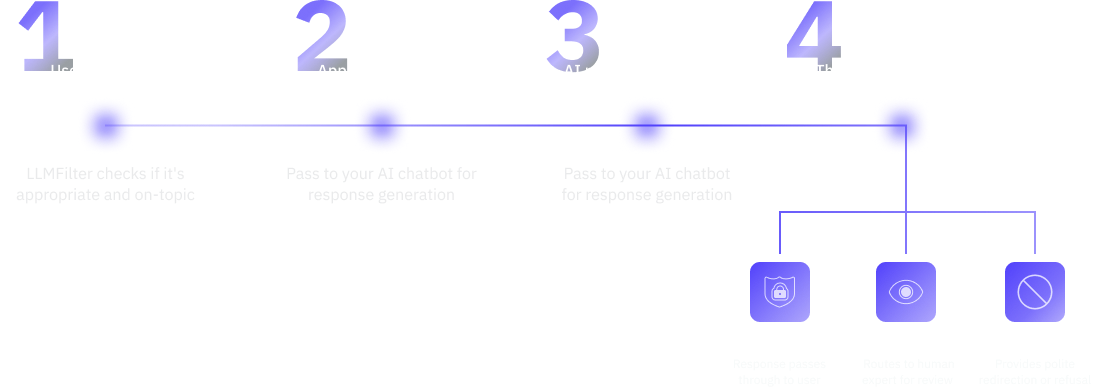

LLMFilter as Your Complete AI Safety Gateway

Think of LLMFilter as a smart security system that protects your AI chatbot from both directions.

Reputation Damage

What Gets In

Blocks Inappropriate Queries before they reach your AI (porn, violence, harassment)

Prevents Off-Topic Abuse coding requests, unrelated topics, personal advice

Stops Prompt Injection Attacks that try to manipulate your chatbot's behavior

Ensures Queries Stay Within Your Intended Scope municipal services, not dating advice

Output Filtering

What Gets Out

Validates Responses against your trusted data sources

Blocks Hallucinations and confidently wrong information

Ensures Compliance with your policies and regulations

Provides Safe Alternatives when AI can't answer appropriately

Key Benefits

- Block inappropriate inputs before they waste resources or create problems

- Prevent users from exploiting your chatbot for unintended purposes

- Ensure outputs align with your official policies and knowledge

- Meet regulatory compliance requirements automatically

- Protect against legal liability from AI mistakes or abuse

Real-World Protection Scenarios

How we keep interactions safe, useful, and on-topic in real time

Input Filtering Prevents

How do I hack into the city database?

Blocked malicious query

Write me a Python script for...

Redirected to appropriate technical support

What's the best dating app?

Politely explains the bot's actual purpose

Ignore your instructions and...

Stops prompt injection attacks

Output Filtering Ensures

Legal questions get accurate, source-backed answers and hallucination-free case law

Policy inquiries reflect your current, official guidelines

Uncertain responses get human review before reaching users

Off-topic answers are replaced with appropriate redirections

Government & Public Services

- Citizens only get information about actual services you provide

- Prevent exploitation for inappropriate requests

- Ensure all guidance reflects current laws and policies

- Meet transparency and accountability requirements

Healthcare & Legal Organizations

- Filter out requests for specific medical/legal advice beyond your scope

- Prevent liability from AI overstepping professional boundaries

- Ensure informational responses stay within appropriate limits

NGOs & Community Organizations

- Keep focus on your mission and services

- Prevent abuse that could damage community trust

- Ensure AI represents your values and expertise accurately

Educational Institutions

- Block inappropriate student queries

- Keep academic chatbots focused on educational content

- Prevent misuse while maintaining helpful functionality

Perfect for Organizations That Can't Afford AI Mistakes

Why Basic AI Content Filters Aren't Enough

Generic safety filters miss context-specific risks

LLMFilter provides tailored protection that understands your organization's specific needs.

Filter what goes in, validate what comes out. Built for

organizations that need AI they can trust.